To investigate how Facebook and Instagram's algorithms shape user news feeds, Guardian Australia set up blank smartphones with newly created email addresses. The phones were left untouched for three months, yet their feeds showed increasingly inappropriate content aimed at young men.

The three profiles were set up as 24-year-old men and carried no other personal data; ad tracking was opted out, so Facebook could not collect more. Instagram required five accounts to be followed before it would generate a feed, so the profiles followed popular figures such as Australia's Prime Minister and fitness influencer Bec Judd.

Initially, the feeds consisted mainly of humorous posts about TV shows and news articles from 7 News and the Daily Mail. Over time, they filled with meme images that ranged from sitcom references to misogynistic and sexist material.

After three months, the feeds were dominated by memes about popular titles such as The Office, Star Wars and The Boys, interspersed with increasingly inappropriate imagery, all without any user input.

The experiment echoes findings from earlier YouTube and TikTok experiments focused on young men, suggesting that the algorithms serve up such content based on assumptions about what young men are interested in. This raises concerns about the lack of transparency and accountability in how these platforms decide what to show.

Read next

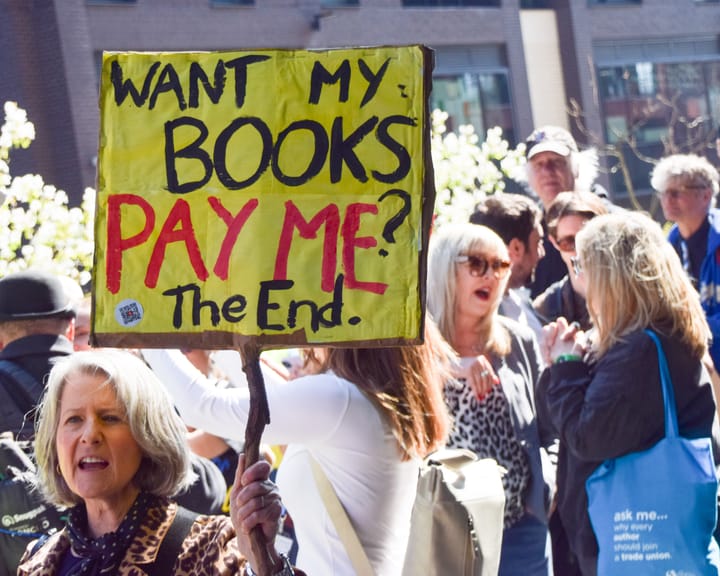

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden