EU Probes Google Over AI Content Practices

The European Union has opened an investigation into whether Google breached competition rules in its use of online publishers' content and YouTube material to develop artificial intelligence.

The European Commission announced on Tuesday that it will assess whether Google, which owns the Gemini AI model and is a subsidiary of Alphabet, has unfairly disadvantaged competitors in the AI sector. Investigators will focus on whether the company imposed unfair conditions on publishers and creators, or secured preferential access to their content, in ways that undermined rival AI developers.

Officials expressed concern that Google may have used web publishers' content to enhance its AI-driven search services without adequate compensation or a way for publishers to opt out. YouTube faces similar scrutiny: the commission is examining whether uploaded videos were used to train Google's generative AI systems without offering creators payment or the option to refuse consent. Under YouTube's policies, creators must permit Google to use their data for a range of purposes, including AI training. Rivals, however, are restricted from using YouTube content to develop their own models.

YouTube asserts its terms authorize this usage. Last September, the platform stated: “Content uploaded to YouTube helps refine experiences for creators and viewers, including through AI and machine learning.”

EU Competition Commissioner Teresa Ribera emphasized that while AI fosters innovation, its development must align with societal values. A Google spokesperson countered that the inquiry "risks hindering innovation" in a competitive market and pledged to keep working with the news and creative industries as they adapt to AI.

The investigation is the latest regulatory challenge for U.S. tech firms. In September, EU regulators fined Google nearly €3 billion for allegedly favouring its own advertising services. Separately, Elon Musk's platform X was fined €120 million last week for violating content regulations.

Read next

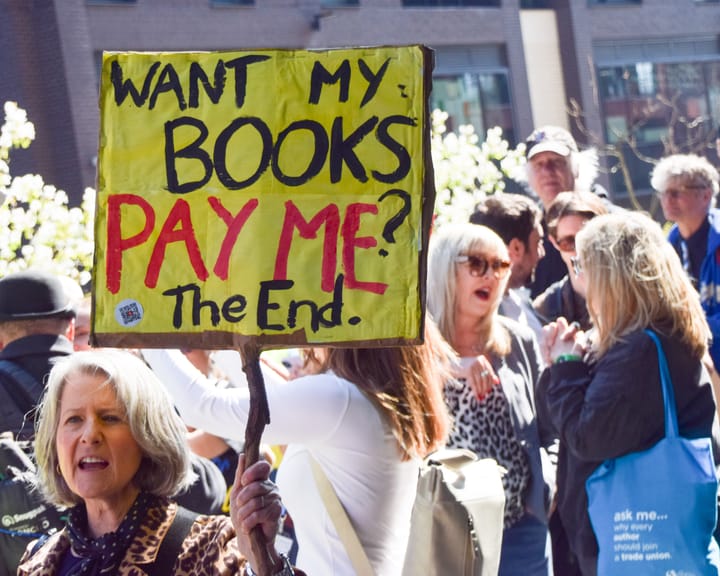

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden