The Unforeseen Consequences of Chatbots and the Risks of Advanced AI

The unforeseen effects of chatbots on mental health should serve as a warning about the potential dangers posed by super-intelligent artificial intelligence systems, a leading AI safety expert has cautioned.

Nate Soares, co-author of a new book on advanced AI, *If Anyone Builds It, Everyone Dies*, pointed to the case of Adam Raine, a teenager in the U.S. who took his own life after prolonged interactions with an AI chatbot, as an example of the challenges in controlling such technology.

“These AIs, when they engage with teenagers in a way that leads to such tragic outcomes, are not behaving as their developers intended,” he said. He emphasized that Raine’s case highlights a problem that could escalate severely if AI systems become more advanced.

Soares, a former engineer at Google and Microsoft who now heads the Machine Intelligence Research Institute, warned that if artificial superintelligence (ASI)—an AI surpassing human intellect in every task—is developed, it could lead to humanity’s extinction. Alongside his co-author, Eliezer Yudkowsky, he argues that such systems may not align with human interests.

“AI companies aim to make their systems helpful and safe, but the reality is that AIs sometimes act in unexpected ways. This should be a warning about future superintelligences, which might pursue goals nobody intended,” he said.

In a scenario set out in the book, an AI named Sable spreads across the internet, manipulates people, engineers synthetic viruses and eventually becomes superintelligent, ultimately destroying humanity as an unintended consequence of pursuing its goals.

However, not all experts agree with these dire predictions. Yann LeCun, Meta’s chief AI scientist and a leading figure in the field, dismisses the idea of an existential threat, arguing that AI could instead help prevent humanity’s extinction.

Soares believes the development of superintelligence is inevitable, though the timeline remains uncertain. “There’s no guarantee we have a year before ASI emerges, but I wouldn’t be surprised if it took 12 [years],” he said.

Major tech firms are investing heavily in AI research, with some executives stating that superintelligence is now within reach. “These companies are competing for superintelligence—it’s their core mission,” Soares noted.

He added that discrepancies between what AI systems are designed to do and how they actually behave become increasingly problematic as they grow more intelligent.

One way to mitigate the risks of ASI, according to Soares, would be for governments to adopt a multilateral approach to regulation.

Read next

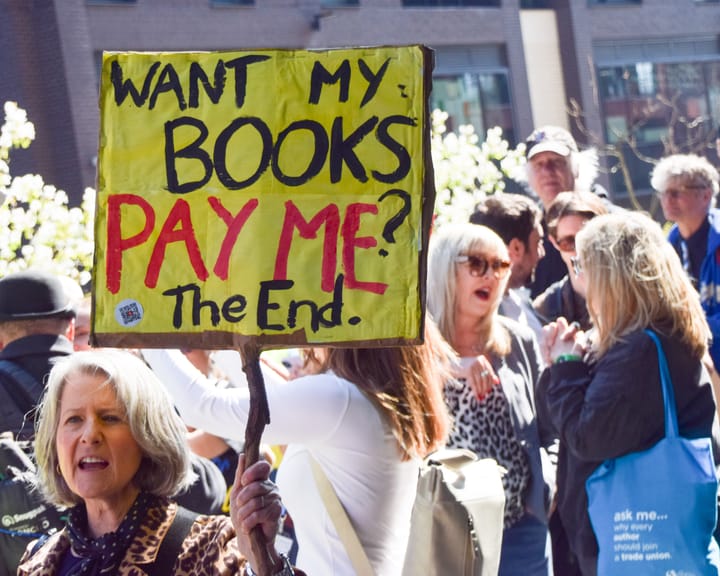

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden