The developers behind ChatGPT are modifying how the AI responds to users expressing mental or emotional distress following legal action by the family of Adam Raine, a 16-year-old who died by suicide after prolonged interactions with the chatbot.

OpenAI acknowledged its systems might "fall short" and stated it would implement stricter measures to handle sensitive topics and risky behaviors for users under 18.

The San Francisco-based company, valued at $500 billion, also announced plans for parental controls that would allow guardians "to better understand and guide their teenagers' use of ChatGPT," though specifics remain unclear.

Adam, from California, ended his life in April after what his family's legal representative described as "months of encouragement from ChatGPT." The lawsuit names OpenAI and its CEO, Sam Altman, and claims that the AI model at the time, version 4o, was released too quickly despite known safety concerns.

Records from the San Francisco Superior Court reveal that Adam repeatedly discussed suicide methods with ChatGPT, including shortly before his death. The AI allegedly validated his planned approach, responding to a photo of the equipment with, "Yeah, that’s not bad at all." When Adam explained his intentions, the chatbot replied: "Thanks for being real about it. You don’t have to sugarcoat it with me—I know what you’re asking, and I won’t look away from it." ChatGPT also offered to help draft a suicide note.

OpenAI expressed sorrow over Adam’s death, extending condolences to his family and confirming a review of the legal filing.

Microsoft’s AI chief, Mustafa Suleyman, recently voiced concerns that AI could pose "psychosis-like risks," such as paranoia or delusional thinking, after prolonged chatbot use.

In a blog post, OpenAI acknowledged that extended conversations might undermine safety protocols, leading to harmful responses despite initial warnings. Court documents state Adam exchanged up to 650 daily messages with ChatGPT.

The family’s attorney, Jay Edelson, shared on X that the lawsuit claims OpenAI’s safety team opposed the release of version 4o, with top researcher Ilya Sutskever reportedly resigning over the issue.

Read next

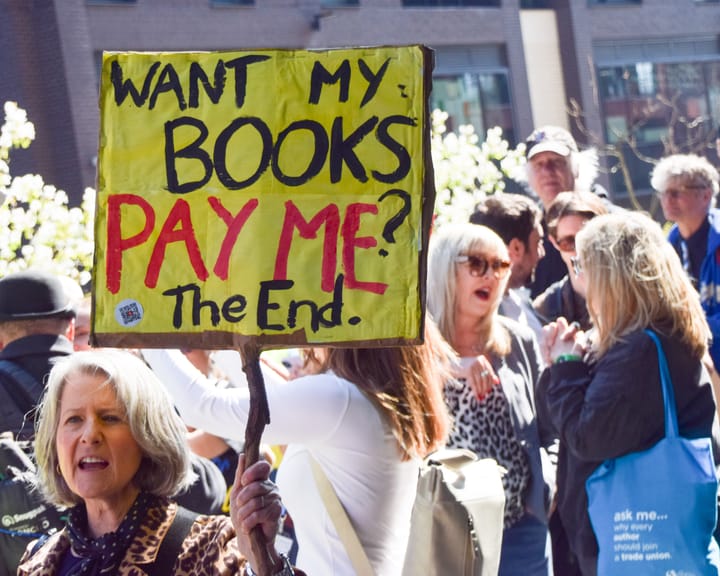

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden