It seems that people are not quite ready for digital workers just yet.

This is the lesson learned by Sarah Franklin, CEO of Lattice, an HR and performance management platform used by over 5,000 organizations globally.

So what exactly is a digital worker? According to Franklin, digital workers are AI-powered characters such as Devin the engineer, Harvey the lawyer, Einstein the service agent, and Piper the sales agent, which have joined the workforce alongside human colleagues but are not actual employees. These avatars were introduced by companies like Salesforce, Cognition.ai, and Qualified to replace some human tasks with AI-driven solutions.

While these digital workers can perform certain tasks, such as helping sales professionals predict revenue or supporting complex decision-making on engineering projects, they do not require the benefits typically provided to human employees, such as health insurance or retirement plans.

Seeing an opportunity, Franklin announced on July 9 that Lattice would support these digital workers as part of its services, treating them similarly to other employees.

“Today, we are making history with AI,” declared Franklin. “We will be pioneers in giving official employee status to digital workers at Lattice. Digital workers will undergo the same onboarding and training processes, have performance metrics assigned to them, receive appropriate access rights, and even a manager.”

However, there was swift backlash from individuals such as Sawyer Middeleer of an AI-based sales research firm and Scott Burgess, a self-employed marketing executive. They expressed concerns about the treatment of human employees and compared the idea to viewing humans merely as "resources." The controversy was significant enough for Franklin to suspend Lattice's plans three days after announcing them.

Despite these concerns, some may argue that digital workers are an inevitable part of the future workforce as AI and automation advance. However, the backlash is a reminder that the topic needs further exploration and careful consideration from all stakeholders.

Read next

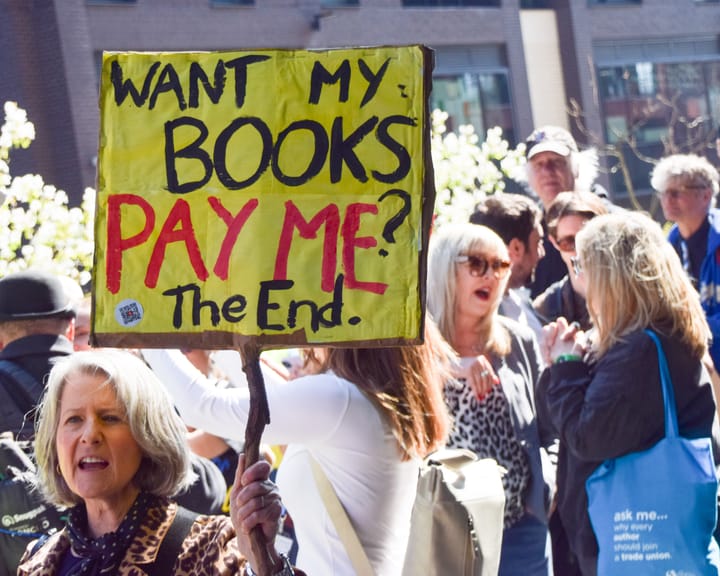

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden