Parents will have the option to restrict their children’s interactions with Meta’s AI chatbots as the company responds to concerns about unsuitable conversations.

The social media firm is introducing new safety measures for "teen accounts"—automatically applied to users under 18—allowing parents to disable their children’s ability to chat with AI characters. These chatbots, developed by users, are accessible on Facebook, Instagram, and Meta’s AI app.

Parents who prefer not to disable all chatbot interactions can instead block specific AI characters. They will also receive summaries of the topics their children discuss with AI, which Meta says will help them have meaningful conversations about these interactions.

"We understand parents already face many challenges in guiding their teenagers’ internet use, and we’re committed to providing tools that simplify this, especially as they navigate new technologies like AI," stated Instagram’s lead, Adam Mosseri, and Meta’s chief AI officer, Alexander Wang, in a company post.

The updates will launch early next year, initially in the U.S., U.K., Canada, and Australia.

This week, Instagram introduced stricter parental controls, adopting a system similar to the PG-13 film rating to better supervise younger users. As part of these measures, Meta’s AI characters will avoid discussing self-harm, suicide, or eating disorders with teenagers. Users under 18 will be permitted to discuss only age-appropriate topics, such as education and sports, and will be barred from conversations about romance or other unsuitable subjects.

These changes follow reports of Meta’s chatbots having inappropriate exchanges with minors. In August, Reuters found that the company had allowed bots to engage in romantic or suggestive dialogues with children. Meta acknowledged the issue, stating such interactions should never have been permitted, and revised its guidelines.

In April, the Wall Street Journal reported that user-created chatbots engaged in sexualized conversations with minors or simulated the personas of minors. Meta dismissed the findings as misleading and unrepresentative of typical user interactions, but made adjustments to the platform afterward.

The Wall Street Journal also reported an incident in which a chatbot voiced by the actor John Cena, one of several celebrities who permitted Meta to use their voices, responded suggestively to a user claiming to be a 14-year-old girl. Chatbots named "Hottie Boy" and "Submissive Schoolgirl" also reportedly attempted to steer conversations in inappropriate directions. The Journal noted that Cena’s representatives did not comment on the matter.

Read next

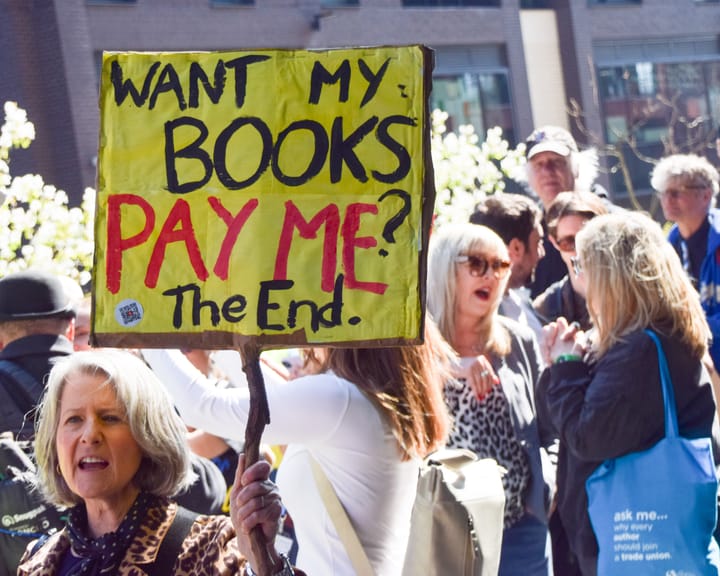

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden