Meta has announced its latest artificial intelligence model, Llama 3.1 405B, which the company claims stands shoulder to shoulder with leading AI models from competitors such as OpenAI and Anthropic across a range of tasks.

In a recent blog post, the company said the open-source system lets developers fully customize it for their specific needs: they can train it on new datasets and perform additional fine-tuning without restrictions imposed by intermediaries. The release marks a significant milestone in AI technology, as the model becomes available for direct use without requiring payment or subjecting one's work to Meta's control.

At present, access to Llama is limited to Meta's own platforms and is available only in the United States. However, those using the model will benefit from an additional layer of safety measures that are also open-source, so the company cannot dictate how others use it.

Meta’s co-founder, Mark Zuckerberg, expressed his belief that open-source AI is important for fostering a positive future. He emphasized the technology's potential to enhance human productivity, creativity and quality of life, and to contribute to advances in fields such as medical research. Zuckerberg argues that keeping AI development open-source distributes power more evenly and safely throughout society and prevents it from being concentrated among a few corporations.

Zuckerberg acknowledges that bad actors could misuse advanced AI capabilities, but posits that widespread deployment may make it easier to keep such actors in check, as larger entities can counterbalance their actions. While Meta has highlighted that Llama 3.1 405B is comparable in size to top competitors' systems, fair comparisons between this model and others such as GPT-4 are still needed to confirm whether its performance matches that of the current leaders in AI technology.

Read next

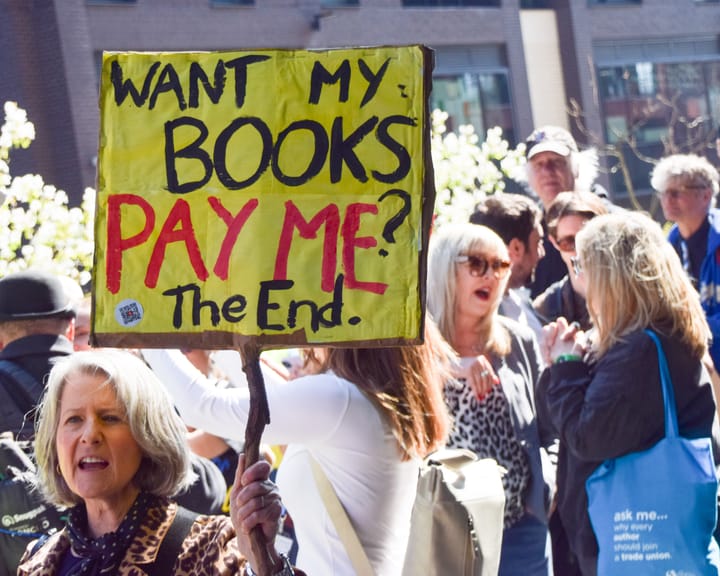

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden