Microsoft has introduced an artificial intelligence system that demonstrates superior performance to human physicians in diagnosing complex medical conditions, signaling a potential shift toward advanced medical intelligence.

The company's AI team, led by British technology expert Mustafa Suleyman, created a system that mimics the decision-making of expert medical panels when handling intricate cases. When paired with OpenAI’s latest AI model, the system correctly solved more than 80% of specially selected case studies. By contrast, practicing physicians, working without external resources, correctly diagnosed only 20% of the same cases.

The system also proved more efficient in recommending medical tests, potentially reducing costs compared to traditional diagnostic methods. Microsoft emphasized that AI would assist rather than replace doctors, noting that physicians play a broader role in patient care, including building trust and managing uncertainty—areas where AI falls short.

While acknowledging the system’s capabilities, Microsoft cautioned against overestimating AI’s diagnostic abilities based on standardized medical exams, arguing that such tests rely heavily on memorization rather than deep comprehension. The company stated that its model operates similarly to real-world clinicians, following a structured process involving patient inquiries and diagnostic tests before finalizing conclusions. For example, analyzing symptoms like fever and cough might necessitate blood tests and imaging before determining if pneumonia is present.

To build the system, Microsoft’s team converted more than 300 complex case studies from the *New England Journal of Medicine* into interactive challenges. The system integrates existing AI models from OpenAI, Meta, Anthropic, xAI (Grok), and Google (Gemini), supplemented by a specialized "diagnostic orchestrator" that determines which tests to order and how to interpret the results.

Read next

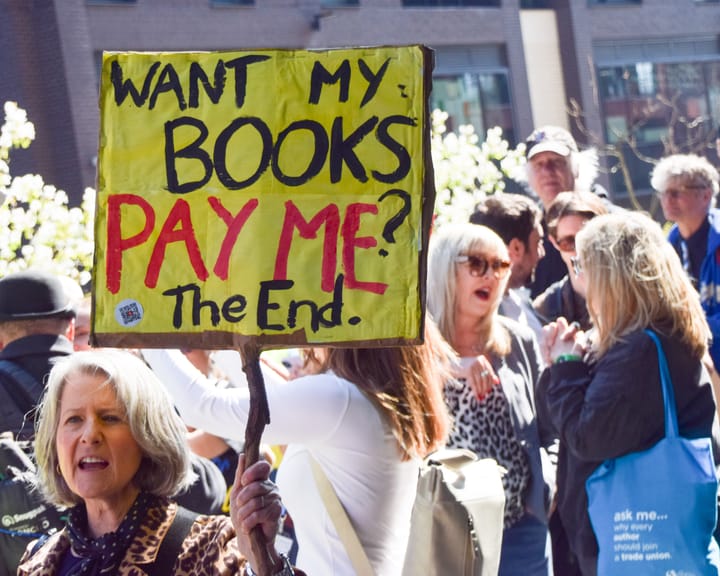

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden