During his recent visit to Washington, OpenAI’s CEO, Sam Altman, outlined a future shaped by artificial intelligence, predicting the disappearance of entire job sectors, AI-driven guidance for government leaders, and the potential use of advanced AI as a weapon by adversarial nations. At the same time, he positioned his company as a key player in shaping this technological evolution.

Speaking at the Federal Reserve’s Capital Framework for Large Banks conference, Altman stated that certain jobs would be entirely eliminated by AI progress.

“Some roles, I believe, will completely vanish,” he said, emphasizing customer support positions. “When you contact customer service now, you’re interacting with AI—and that’s acceptable.”

Altman claimed that customer service had already undergone significant transformation, telling Federal Reserve vice-chair for supervision Michelle Bowman, “Now when you call, an AI responds. It’s highly intelligent, efficient, and makes no errors—it handles everything a human agent could, without delays or missteps.”

He then shifted focus to healthcare, suggesting AI’s diagnostic skills often exceed those of human doctors, though he hesitated to endorse full reliance on AI for medical decisions.

“Today, ChatGPT generally provides more accurate diagnoses than most doctors,” he noted. “But people still prefer human physicians. Personally, I wouldn’t entrust my health solely to AI without a doctor’s oversight.”

Altman’s trip coincided with the Trump administration’s introduction of an AI strategy aimed at refining regulations and expanding data infrastructure. His engagement with federal officials marked a shift from previous years; while OpenAI and competitors had sought regulation under the Biden administration, discussions under Trump’s leadership now emphasize outpacing China in AI development.

During a public discussion, Altman expressed concerns about AI’s destructive potential, particularly the risk of adversarial nations targeting the US financial system. While acknowledging the benefits of AI in areas like voice replication, he warned that the same technology can be misused for fraud and identity theft, noting that some institutions still use voice recognition for verification.

OpenAI and Altman have now intensified their focus on Washington, striving to influence the path of AI governance.

Read next

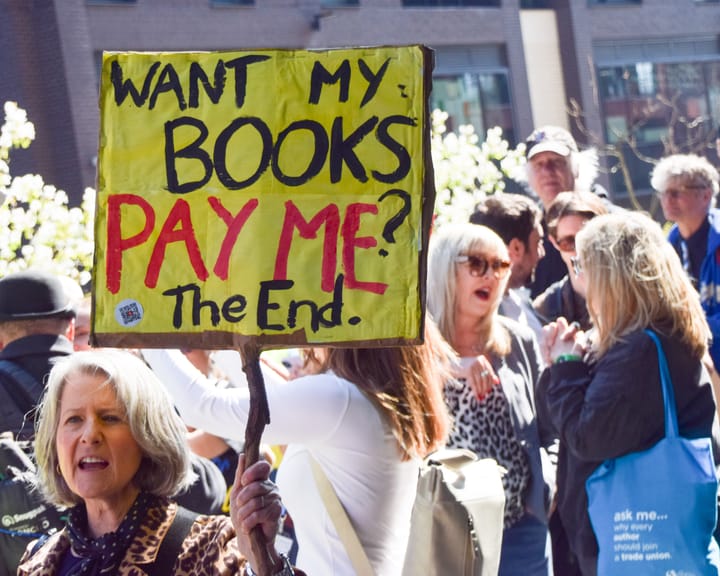

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden