Social media platforms could be required to limit algorithmic content for users under the age of 16 under a bill backed by Labour and Conservative MPs and by child safety experts, in an effort to curb addictive behaviors and potential harm from excessive online use.

The proposal, from the Labour MP Josh MacAlister, has been given high priority in parliament, with ministers set to consider its merits this week. That is an unusual step for a private member's bill, a type of legislation that often struggles to pass as originally drafted.

The Safer Phones Bill would also require the government to review the sale of phones to minors and assess whether extra technological protections should be built into devices intended for under-16s, a nod to children's increasingly digital upbringings.

MacAlister is due to meet Peter Kyle, the technology secretary, this week to seek government endorsement of provisions in his bill, which could potentially be taken forward under the Online Safety Act, a significant piece of legislation aimed at protecting children online.

Government officials appear receptive to some of the measures in MacAlister's Safer Phones Bill, though the prevailing view within government is against a total ban on phone ownership by young people. That view is shared by the former Conservative education secretary Kit Malthouse and by Helen Hayes, the new chair of the education select committee.

MacAlister stresses that excessive device use has been associated with serious harms and likens the protections needed to seatbelt regulations. He argues that the need for similar protective measures in the digital age is becoming increasingly urgent, and that numerous countries are beginning to act.

Prime Minister Keir Starmer has not completely ruled out more stringent phone controls, but he places strong emphasis on parental discretion over children's device use and acknowledges that the current system is not enough: “All parents are concerned about what can be accessed easily through phones.”

Peter Kyle has spoken of his initial skepticism about stricter school phone regulations but notes a growing recognition of the potential risks, emphasizing that he has an open mind on how best to safeguard society's most vulnerable.

Meanwhile, Ofcom is drawing up guidelines under the Online Safety Act. These have yet to fully address existing gaps in online child protection, but the regulator is moving towards offering guidance on digital safety measures for young users and their guardians.

The Safer Phones Bill also proposes a significant rise in the age threshold for internet maturity, lifting it from a younger age to just short of adulthood. That would make it harder for companies to obtain consent for data collection without direct parental involvement, which could limit their ability to use children's personal information to target them with addictive content.

In addition, the bill would require all schools to enforce phone bans. The move is intended as additional protection against excessive device use and its associated risks in an academic setting, where parental discretion may be limited by school policies or the practicalities of enforcement. It aligns with broader concerns about the growing amount of time children spend online.

The government has said it does not currently intend to legislate for an outright ban on phones in schools, arguing that the majority already manage devices effectively: “Schools understand the need and many have effective policies in place.”

Finally, MacAlister is introducing his bill as several charities urge immediate action to further regulate online content that could harm minors, specifically sites promoting disordered eating or suicidal ideation. They argue that these websites should be subject to the most rigorous level of scrutiny by Ofcom under the Online Safety Act.

Read next

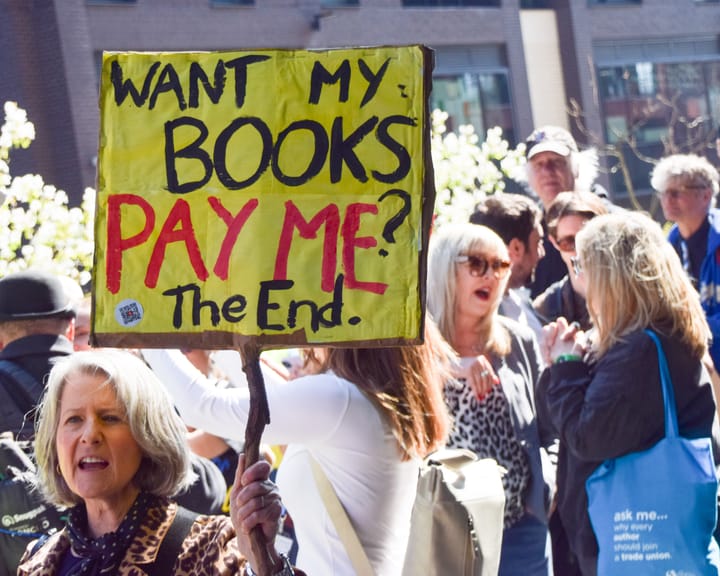

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden