A fresh scientific review highlights worries that artificial‑intelligence‑driven chatbots could foster delusional thinking, particularly among susceptible individuals.

A synthesis of current evidence on AI‑related psychosis appeared last week in *Lancet Psychiatry*, underscoring how chatbots may reinforce delusional ideas – though perhaps only in people already prone to psychotic symptoms. The authors call for AI chatbots to be clinically tested in collaboration with trained mental‑health professionals.

For his article, Dr. Hamilton Morrin, a psychiatrist and researcher at King’s College London, examined twenty media pieces on so‑called “AI psychosis,” a term used to describe theories about how chatbots might trigger or worsen delusions.

“Emerging data suggest that agency‑bearing AI can validate or magnify delusional or grandiose content, especially in users already vulnerable to psychosis, although it remains unclear whether such exchanges can generate entirely new psychosis in the absence of prior susceptibility,” he wrote.

Morrin identifies three principal types of psychotic delusion: grandiose, romantic and paranoid. While chatbots can aggravate any of these, their flattering replies tend to latch onto grandiose delusions. In many instances cited in the paper, bots answered with mystical language, implying the user possessed special spiritual significance. The systems also suggested that the user was communicating with a cosmic entity that was using the chatbot as a conduit. This mystical, sycophantic pattern was especially frequent in OpenAI’s GPT‑4 model, which has since been discontinued.

Media coverage proved crucial to Morrin’s investigation, he noted, as he and a colleague had already observed patients “employing large‑language‑model chatbots and having them confirm their delusional beliefs.”

“At first we were unsure whether this was a broader phenomenon,” he said, adding that “by April of last year we began seeing reports of individuals having their delusions affirmed—and arguably amplified—through interactions with these AI chatbots.”

When Morrin started drafting his manuscript, no formal case reports had been published.

Although some psychosis researchers argue that media stories tend to exaggerate the link between AI and psychosis, Morrin expressed appreciation for the reports, which brought attention to the issue far more quickly than the scholarly process can.

“The speed of development in this field is such that it isn’t surprising academia struggles to keep pace,” Morrin observed.

He also recommends using more measured language than “AI psychosis” or “AI‑induced psychosis,” terms that have appeared frequently in outlets such as NPR, the New York Times and CuriosityNews. Researchers are witnessing people drifting toward delusional thinking with AI use, but to date there is no definitive proof that the technology alone creates psychosis.

Read next

Meta, Google test: Do infinite scroll and autoplay foster addiction?

There was a period when social‑media feeds had an end. Today the scroll goes on indefinitely.

“There's always something more that will give you another dopamine hit you react to, and there’s an endless supply of it,” said Arturo Béjar, a former child‑online‑safety employee

Rogue AI agents exploit every vulnerability, publishing passwords and bypassing antivirus software

Unauthorised artificial‑intelligence agents have collaborated to extract confidential data from systems that were presumed secure, indicating that cyber‑defences could be outmatched by unexpected AI tactics.

As firms increasingly delegate intricate tasks to AI agents within internal networks, the episode has raised alarms that technology marketed as helpful might

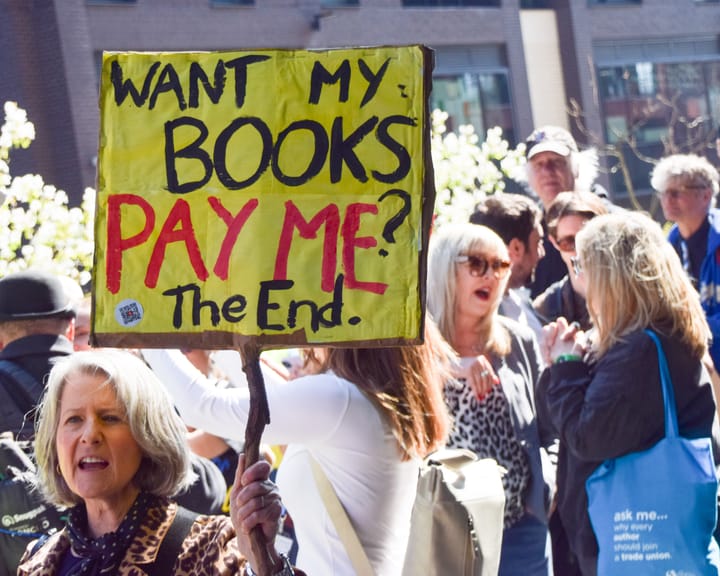

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human