A prominent advocate for online safety has called on the UK government to address the addictive features of social media platforms, as technology companies prepare to enforce new protections for children.

Beeban Kidron, a crossbench peer, urged Technology Secretary Peter Kyle to use the Online Safety Act to introduce new codes of conduct covering misinformation and the design features that drive excessive use among young people.

"The Secretary of State has the authority under the Online Safety Act to introduce new conduct codes," Kidron stated. "We have urgently requested action, but so far, the response has been dismissive."

Kidron emphasized that curbing the influence of platforms designed to maximize user engagement—particularly among minors—was not an overreach. "Ministers have the power to intervene and mitigate these effects—why not act now?"

Research by 5Rights, an organization led by Kidron, identified design tactics that encourage compulsive platform use, such as visible like counts, push notifications, and time-limited content formats like Instagram Stories.

Kidron spoke to *CuriosityNews* ahead of a 25 July deadline requiring online platforms, including Facebook, Instagram, TikTok, YouTube, X, and Google, to implement child protection measures. Pornography websites must also adopt strict age verification.

According to a study from England’s Children’s Commissioner, Dame Rachel de Souza, X is the most common source of adult content for young people. On Thursday, X announced that users who cannot confirm they are over 18 will have restricted access to sensitive material.

Dame Melanie Dawes, CEO of Ofcom, stated, "Platforms can no longer prioritize engagement over child safety. Companies must comply with age verification and protective measures—failure to do so will result in enforcement action."

Under the new rules, social media firms must prevent children from encountering pornography and harmful content promoting self-harm, suicide, or eating disorders. They must also curb violent, abusive, or bullying material.

Violations could lead to fines of up to 10% of global revenue—potentially billions for companies like Meta. In extreme cases, platforms may be blocked in the UK, and executives could face legal consequences for non-compliance.

Ofcom has detailed measures aligned with child safety requirements, including user reporting systems, enhanced content moderation, and restrictions on high-risk algorithms.

Read next

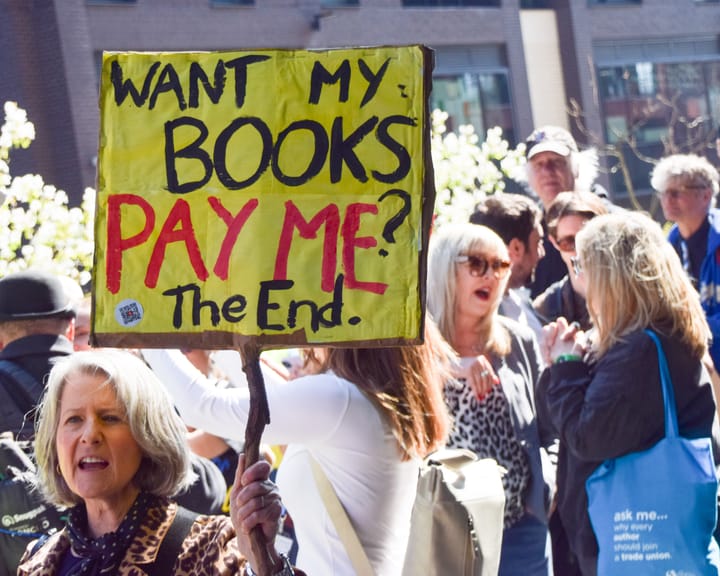

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden