U.S. Auto Safety Regulators Probe 2.88M Tesla Vehicles Over Traffic Violations

U.S. automobile safety officials have launched an investigation into 2.88 million Tesla vehicles equipped with the company's Full Self-Driving (FSD) technology following reports of traffic safety violations linked to crashes.

The National Highway Traffic Safety Administration (NHTSA) stated that Tesla’s FSD system, which requires driver supervision, had "prompted vehicle actions that breached traffic safety laws."

NHTSA's preliminary evaluation is the first step in a process that could lead to a vehicle recall if regulators determine the technology poses a safety risk.

The agency cited instances in which Tesla vehicles with FSD engaged reportedly ran red lights or traveled against the proper direction of travel during lane changes.

According to NHTSA, six reports described Tesla vehicles with FSD active "entering intersections against red lights and subsequently colliding with other vehicles." Four of these incidents resulted in injuries. Tesla has not yet commented on the matter.

The NHTSA also noted 18 complaints and one media report alleging that FSD-equipped Teslas failed to stop properly at red lights or incorrectly identified traffic signal states on the vehicle's display.

Some drivers reported that the system did not provide sufficient warnings before proceeding through red signals.

Tesla’s FSD, a more advanced system than its Autopilot feature, has been under NHTSA review for the past year.

In October 2024, the agency opened an investigation into 2.4 million FSD-equipped vehicles following four collisions in low-visibility conditions, including sun glare, fog, and dust. One of those collisions, in 2023, was fatal.

Tesla’s website states that FSD "requires an attentive driver, hands on the wheel, ready to intervene. While improvements are ongoing, the current features do not make the vehicle fully autonomous."

Read next

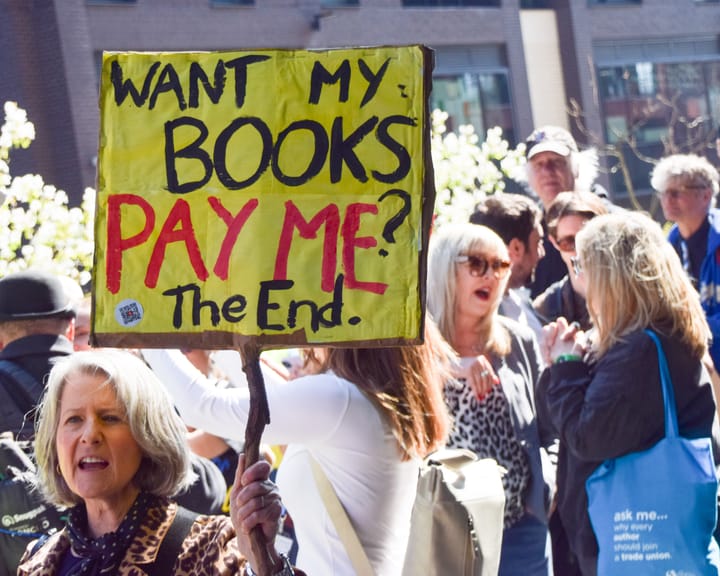

UK Society of Authors unveils logo to mark books authored by humans, not AI

The Society of Authors (SoA) has introduced a programme aimed at marking books that are created by human writers amid a market swamped with AI‑produced titles.

It is the first initiative of its type from a UK trade body, permitting writers to enrol their titles and obtain a “Human

Study finds AI helps hackers uncover anonymous social media profiles.

AI has made it significantly simpler for bad actors to pinpoint anonymous social‑media profiles, a recent study warns.

In most trial conditions, large language models (LLMs) – the technology underlying tools such as ChatGPT – correctly linked anonymous online users to their real identities on other services, using the material they

UK experts say ChatGPT fuels increase in reports of “satanic” organized ritual abuse.

UK specialists say that ChatGPT is prompting an increase in reports of organised ritual abuse, as victims of so‑called “satanic” sexual violence turn to the AI system for therapeutic help.

Police contend that organised ritual abuse and “witchcraft, spirit possession and spiritual abuse” (WSPRA) targeting children are largely hidden