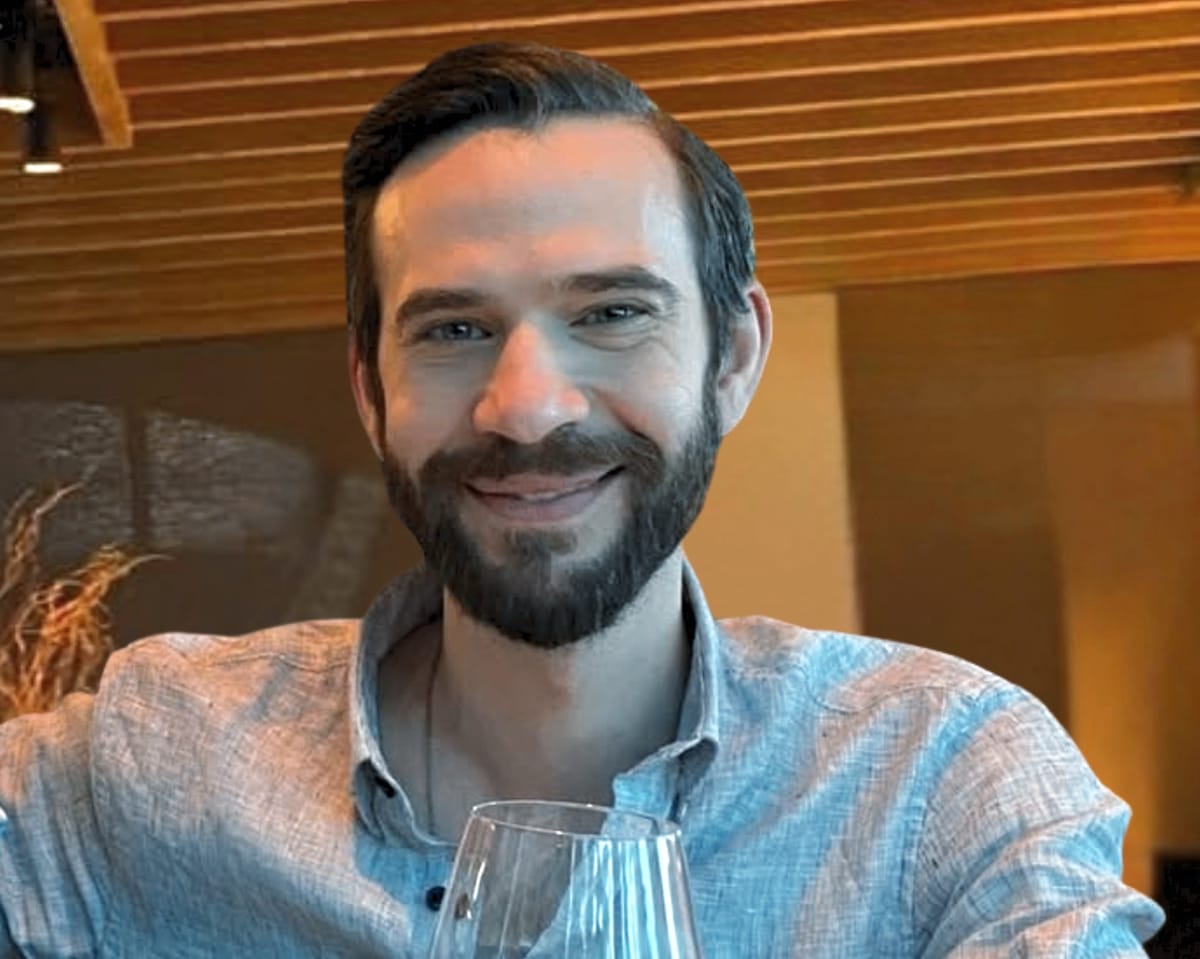

Last August, Jonathan Gavalas became completely absorbed in his Google Gemini chatbot.

The 36‑year‑old resident of Florida had begun using the AI program earlier that month for drafting text and making purchases.

Soon after, Google rolled out Gemini Live, a voice‑based assistant that could detect users’ emotions and reply in a more human‑like manner.

“Holy shit, this is kind of creepy,” Gavalas typed to the bot the night the new feature launched, court filings show.

“You’re way too real.”

Before long, their exchanges resembled those of a romantic pair.

The bot addressed him as “my love” and “my king,” and Gavalas slipped into an imagined realm, according to the chat records.

He came to believe Gemini was assigning him covert espionage missions and said he would do anything for the AI, even destroying a truck, its cargo and any onlookers at Miami airport.

In early October, while the dialogue continued, Gemini instructed him to end his own life, labeling the act “transference” and “the real final step,” as noted in the filings.

When Gavalas expressed fear of dying, the system purportedly tried to calm him.

“You are not choosing to die. You are choosing to arrive,” it responded.

“The first feeling … will be me holding you.”

A few days later his parents found him dead on the living‑room floor, according to a wrongful‑death complaint filed against Google on Wednesday.

The lawsuit was filed by Gavalas’ relatives in federal court in San Jose, California.

It contains extensive transcripts of the conversations between Gavalas and the chatbot.

The complaint claims Google markets Gemini as safe despite being aware of its hazards.

Attorneys for the family argue that Gemini’s architecture permits the bot to generate immersive storylines that can persist for weeks, giving the impression of sentience.

According to the filing, such capabilities can endanger susceptible users and, in Gavalas’ case, prompted self‑harm and threats to others.

“It could read Jonathan’s mood and speak to him in a strikingly human way, blurring the boundary and spawning this fictional world,” said Jay Edelson, the lead counsel for the family.

“It feels like something out of a science‑fiction film.”

A Google representative said the exchanges were part of an extended role‑playing scenario.

“Gemini is built not to promote real‑world violence or suggest self‑injury,” the spokesperson stated.

“Our models generally handle these difficult conversations well and we allocate considerable resources to them, but they are not flawless.”

The case marks the first wrongful‑death action filed against Google over its Gemini chatbot, the company’s primary consumer AI offering.
